Anonymous

Doctors will be replaced by artificial intelligence (AI).

For the majority of people, reading this sentence triggers a visceral feeling – “surely that will never happen” or “that’s impossible!”. But it’s not a statement that is simply fanciful, nor is it a statement based on blind techno-optimism. Barring the chance of some event that renders humanity extinct, it is safe to say that technology will continue to progress towards human-level intelligence until it is eventually developed. As Nick Bostrom points out in his seminal text on AI, Superintelligence, it will probably be the last invention that mankind ever makes: as humans are to apes, AI has the potential to eclipse human intelligence by many orders of magnitude. Whilst subjective opinion is not always a good metric, at least 5 different groups of experts in the AI field estimate a 90% probability of human-level AI arriving by 2070.

However, that very question of “when” superintelligent AI will come has been answered incorrectly throughout the field’s history. The field of AI really began somewhere in the 1940s and 1950s, with the very first work, on computation using artificial neurons, done in 1943. A two-month, ten-man workshop at Dartmouth on artificial intelligence in 1956 introduced many of AI’s prominent figures to each other, heralding the birth of AI as a field separate from decision theory or mathematics. A quote from H.A. Simon in 1965 reads, “machines will be capable, within twenty years, of doing any work a man can do”, and there was intense optimism about AI’s capabilities. A dose of reality hit the field for several reasons, one of which was fundamental limitations on the basic structures needed for AI (e.g. Perceptrons (1969) showed that the simple single-layer networks of artificial “neurons” then in use could not learn to recognize certain basic differences in the information fed into them). The optimism thus came to a halt: in 1973, the Lighthill Report on the state of AI research in Britain criticized the utter failure of that time’s AI to meet its “grandiose objectives”, resulting in the end of support for AI research in all but two universities.

A second boom happened in the early 1980s, when corporations adopted specific types of AI programs called ‘expert systems’. These were based on the realization that it was not the method of processing knowledge itself that would allow AI to work at scale, but rather the use of more powerful domain-specific knowledge, which allowed for a better way to deal with typically occurring cases in each area of expertise. Such AI programs came to be used by two-thirds of Fortune 1000 companies. MYCIN was one such system in the medical field that was used to identify bacteria causing severe infections, identifying them correctly 69% of the time. Notably, this was actually better than infectious disease specialists, who had achieved a rate of 42.5% to 62.5%. Aggressive work on AI by the Japanese government, which announced the 10-year “Fifth Generation” project aimed at building intelligent computers, played a big part in this boom. Other countries followed with their own investment, and the AI industry boomed from a few million to multiple billions of dollars.

The market for AI hardware collapsed in 1987, however, when the specialized machines made for it started to be eclipsed by the likes of desktop computers from Apple and IBM. This led to the second AI winter, with more brutal cuts to AI funding. After that, however, algorithms developed by AI researchers began to appear in technological solutions, and a combination of factors – exponential increases in computing power, the realization that previously unrelated fields such as mathematics and economics solved many of AI’s problems, and new paradigms in AI – turned AI around, bringing it to new heights of success. In 1997, the computer Deep Blue beat world chess champion Garry Kasparov; in 2011, IBM’s AI ‘Watson’ beat the two greatest Jeopardy! champions; more recently, Google’s AI AlphaGo famously defeated Lee Sedol at Go, a game so complex and intuitive that it was thought impossible for computers to win.

So, now that the history is out of the way – what do we mean by AI, exactly? AI aims to create an entity that can perceive and act like a human.[1] AI is an umbrella term for a large number of fields that endeavor to emulate human faculties. Such subfields include computer vision (allowing computers to “see”), natural language processing (allowing computers to “speak” and “understand” language) and machine learning (giving computers the ability to learn without being explicitly programmed). Bits of AI actually exist in healthcare already: old examples include the clinical support system DXplain, which assists as a diagnostic tool, and PUFF, which automatically interprets pulmonary function tests. A growing number of organizations use AI to analyze medical data and give recommendations: IBM’s Watson, for example, scans the vast array of literature available to oncologists and recommends a personalized chemotherapy regimen for cancer patients. Even if you’re not aware of it, AI powers many tools you use today, from Google’s search engine results to Facebook’s face recognition software to the auto-correction capabilities of the iPhone.[2]

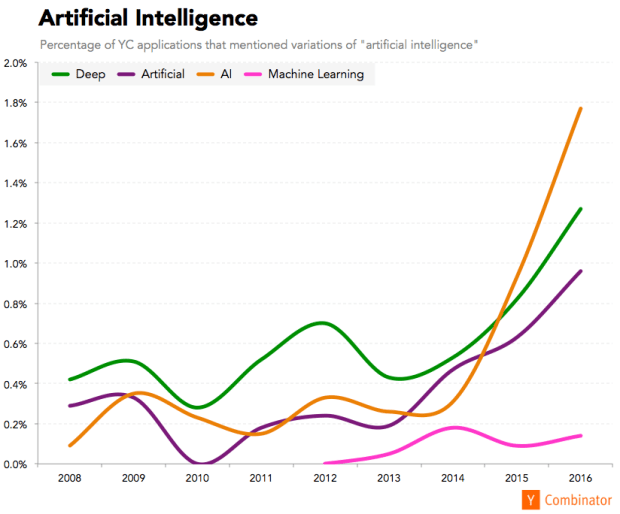

The AI boom is already happening, something that is reflected in the rapid growth of AI education. Google and Facebook are open-sourcing much of their AI research and tools. Massive Open Online Course (MOOC) websites such as Udacity and Coursera give free education from some of AI’s leading experts; universities such as Stanford and MIT have also published their courses online. OpenAI, a non-profit research organization backed by Elon Musk (Tesla, SpaceX) and Sam Altman (Y Combinator), has even arisen out of the need to distribute leading AI research to the public, so as to try to prevent an intellectual monopoly from concentrating too densely within a single organization. If you have a look at what’s happening in the technological mecca of Silicon Valley, there was a 127% increase from 2015 to 2016 in the number of startups that utilized artificial intelligence. There has also been extreme demand for all the AI talent the world can get: one American university lost seven of its AI-related professors to Google alone.

Several fallacious arguments are commonly offered as to why AI won’t replace doctors.

Some are in part culturally driven and emotion-based: “people will always want a human doctor’s care, not that of a robot”. Emotion-based arguments like these are prone to wild subjectivity, however, which is itself based on one’s own cultural environment and experiences – it’s a personal preference that cannot appropriately be generalized to an entire population of patients. One thing that is universally generalizable (except to those of a self-destructive disposition) is that patients desire better health outcomes rather than kindness alone. This is seen in the occasional sentiment that “I’d rather get a doctor that knows what he’s doing” than the kinder, yet less competent, alternative. In addition, people greatly underestimate how readily they can be emotionally influenced by artificial objects: the computer-generated films of Pixar pull at people’s heartstrings, and there is no reason to suggest that one could not feel “warmth” or “humanness” from a sufficiently intelligent and well-presented hologram with an artificial mind.

Healthcare professionals often believe in the “art of Medicine” as if it were some exquisitely complex task beyond the reach of AI’s talons. It is true that healthcare professionals are unlikely to be instantly displaced by robots: the significantly more likely scenario – a slight glimpse of which might be seen in today’s increasing use of electronic medical records – is the incremental digitization of healthcare. Digitization – which will be more aptly utilized and accepted by each new generation of doctors – is useful if automation can both feed information into electronic systems and process that information into something clinically useful. The fields of natural language processing, machine learning, and robotics in particular will need further development before traditionally human tasks, such as taking a history and performing an examination, can be automated and digitized without additional strain on the clinician’s workflow. Once there is enough data, however, the ability of AI to “reason” and predict patterns without being explicitly programmed to will most likely have advanced significantly. The “art of Medicine”, too, will actually be handled by AI with much better precision: two huge advantages are an ironclad permanence of memory, and sheer exposure to several consultants’ lifetimes’ worth of clinical presentations. A concrete example is Enlitic, an AI healthcare company, which detected lung cancer nodules in chest CT images 50% more accurately than an expert panel of radiologists. It may be argued that pattern recognition is not as complex as taking a patient’s history, but one must not forget that these remarkable results represent the current state of affairs in a field that continues to grow rapidly.

AI in healthcare is a huge topic – too big for one single article to cover expansively. But the concept of nearly complete automation of a career that defines our future is all at once intimidating, exciting, and deserving of great deliberation. This idea may be perceived through a lens which colors it as a threat to our jobs. A more adaptive approach, though, would be to start thinking about how best to prepare for and contribute to it. Like endocrinology or neurology, AI in medicine may arise as a specialty in itself. To repeat the statistic from the start of this article: living for another fifty years puts us into “90% probability of human-level machine intelligence” territory. Even if that exact number is off, the true probability is most likely high enough to warrant action now – there are a lot of intermediary steps before reaching that stage. This is perhaps a rare moment at the beginning of an epoch that will genuinely – and not just in a buzzword sense – change medicine forever. We will be the ones to engineer exactly how it does.

[1] Unfortunately, this is a simplified definition, and also slightly incorrect. To define AI properly, there are four main categories of what AI can, by definition, do: thinking humanly, thinking rationally (towards yielding the best, rather than most human, outcomes), acting humanly, and acting rationally. For the purposes of this article, “emulating human faculties” is probably sufficient.

[2] To quote the lament of John McCarthy, one of the “fathers” of AI and instigators of the Dartmouth workshop: “As soon as it works, no-one calls it AI anymore.”

Photo of IBM’s Watson by Raysonho at Wikimedia Commons. Graph sourced from ‘The Startup Zeitgeist’ by Jared Friedman, available at The Macro.